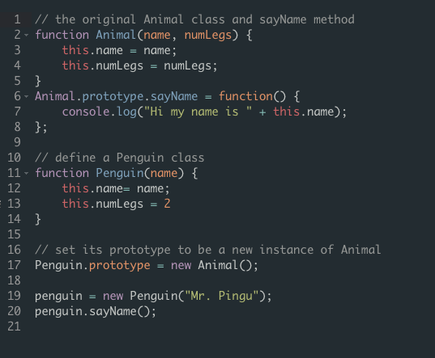

Because moodle might stop working for me soon, I'll keep note of some useful resources I used while auditing a computer science class at Colgate before it's too late.

These resources focus on Java but it really helped me understand overall concepts that are probably shared with all programming languages not just Java (like stack and heap, run time differences, etc.)

Also, in learning new concepts throughout the course I realized that always keeping track of the run times of each bit of code and constantly thinking of how to rewrite it in an effort to shorten the time was one of the most important factors in writing good code. Unfortunately, I thought this was the hardest part and requires a lot of practice. I found a great wikipedia article that lists all of the different types of time complexities in algorithms and detailed explanations of each which everyone should check out!! I'll make a VERY condensed summary though.

Time complexity

Examples

1. constant time (O(1))

An algorithm is in constant time if the value of T(n) (max time) is not determined by the size of input. An example would be accessing a single element in an array because one operation is performed.

2. linear time (O(n))

An algorithm is in linear time if the size of the input determines the run time. The running time increases linearly with the size of input (but usually for very large inputs). In contrast to constant time, finding the minimal value in an unordered array is considered to be in linear time because we need to scan over each element in the array to determine the minimal value. If the array was ordered, it can be considered constant.

3. logarithmic time T(n)=O(log n)

An algorithm is said to take logarithmic time if T(n) = O(log n). Because computers use the binary numeral system the base of the log is generally base 2 (log2 n) but in big-O notation it is usually discarded because the change in base number only changes the runtime by a constant multiplier. Logarithmic time algorithms are usually found in operations on binary trees or binary searches. An O(log n) algorithm is highly time efficient because the operations per instance required decrease with each instance. A simple example would be an algorithm that cuts a string in half.

4. polynomial time T(n) = O(nk) for some constant k

An algorithm is said to be polynomial if its running time is upper bounded by a polynomial expression in the size of the input. Examples of these algorithms are the quicksort and basic arithmetic operations.

These resources focus on Java but it really helped me understand overall concepts that are probably shared with all programming languages not just Java (like stack and heap, run time differences, etc.)

- Java notes

- Algorithms Unlocked - by Weiss. A PDF version of this book can be found by googling it

Also, in learning new concepts throughout the course I realized that always keeping track of the run times of each bit of code and constantly thinking of how to rewrite it in an effort to shorten the time was one of the most important factors in writing good code. Unfortunately, I thought this was the hardest part and requires a lot of practice. I found a great wikipedia article that lists all of the different types of time complexities in algorithms and detailed explanations of each which everyone should check out!! I'll make a VERY condensed summary though.

Time complexity

- quantifies the amount of time an alogrithm needs in order to run

- expressed by big O notation

Examples

1. constant time (O(1))

An algorithm is in constant time if the value of T(n) (max time) is not determined by the size of input. An example would be accessing a single element in an array because one operation is performed.

2. linear time (O(n))

An algorithm is in linear time if the size of the input determines the run time. The running time increases linearly with the size of input (but usually for very large inputs). In contrast to constant time, finding the minimal value in an unordered array is considered to be in linear time because we need to scan over each element in the array to determine the minimal value. If the array was ordered, it can be considered constant.

3. logarithmic time T(n)=O(log n)

An algorithm is said to take logarithmic time if T(n) = O(log n). Because computers use the binary numeral system the base of the log is generally base 2 (log2 n) but in big-O notation it is usually discarded because the change in base number only changes the runtime by a constant multiplier. Logarithmic time algorithms are usually found in operations on binary trees or binary searches. An O(log n) algorithm is highly time efficient because the operations per instance required decrease with each instance. A simple example would be an algorithm that cuts a string in half.

4. polynomial time T(n) = O(nk) for some constant k

An algorithm is said to be polynomial if its running time is upper bounded by a polynomial expression in the size of the input. Examples of these algorithms are the quicksort and basic arithmetic operations.

RSS Feed

RSS Feed